“Kubernetes is an open-source system for automating deployment, scaling, and management of containerized applications.” This is the official definition of Kubernetes according to https://www.kubernetes.io. Understanding Kubernetes the first time you encounter it is not easy, so let's start by reviewing some of the terminology.

What is a Container?

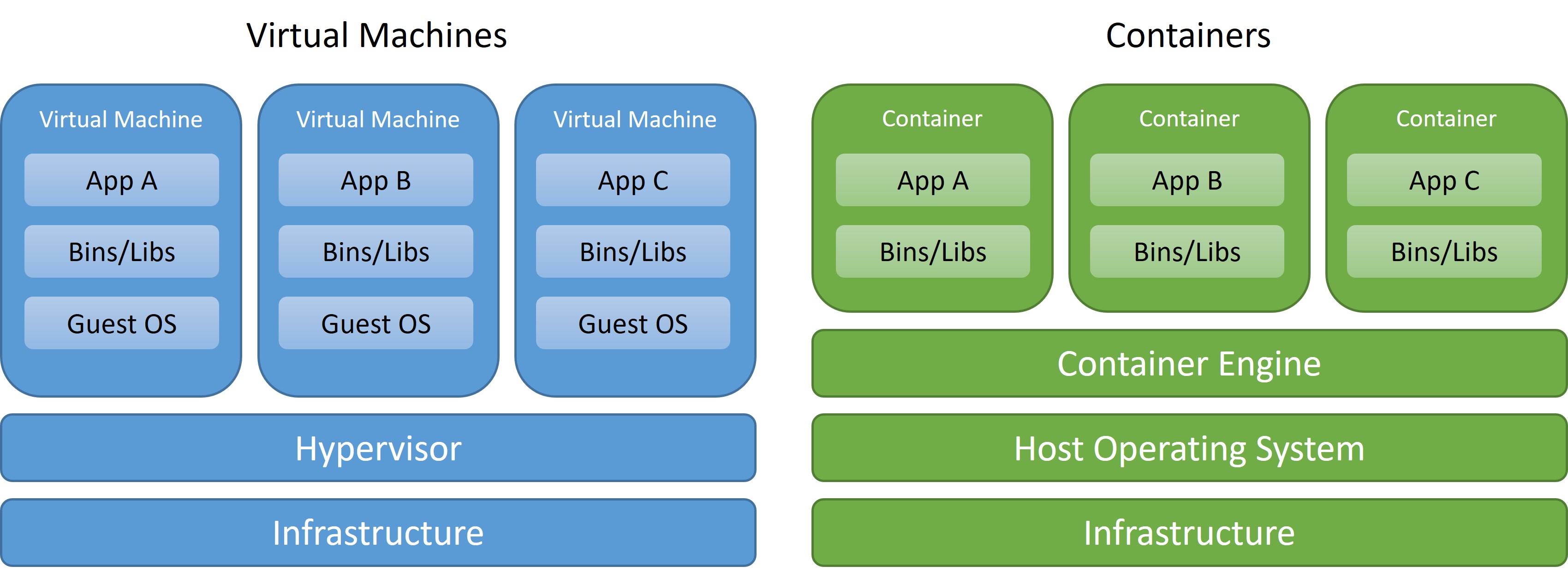

In simple terms, a container is a lightweight form of virtualization that lets you package an application together with its dependencies and run it isolated from other applications. It is important to understand that a container is not a virtual machine; the two differ in several important ways.

What's the difference between Containers and Virtual Machines?

The big difference is that a virtual machine runs on top of a hypervisor, which virtualizes the whole machine down to the hardware level, so every virtual machine runs its own complete guest operating system. Containers only virtualize the software layer above the operating system: they share the host operating system's kernel and much of its functionality, as you can see in the following image.

Here are some of the many benefits of using containers:

- Less overhead

- Increased Portability

- Consistent Operation

- Greater Efficiency

- Better application development

And here are some of the drawbacks of this technology:

- Not the right tool for every task

- Weaker Isolation

- Limited Tools

- Shared Host Exploits

What is Kubernetes?

We started this post with a definition of Kubernetes, but in summary it is an orchestration tool for containers: you describe the containerized applications you want to run, and Kubernetes deploys them, keeps them running, and can scale them automatically without human intervention. There are many implementations of Kubernetes (also known as K8s), but one of the simplest to deploy and manage is Microk8s.
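Automatic scaling is usually configured with a HorizontalPodAutoscaler object. As a minimal sketch, assuming a Deployment named `hello-web` (a hypothetical name) and a metrics source such as the metrics-server add-on, the following Python builds an `autoscaling/v2` manifest and prints it as JSON, a format `microk8s kubectl apply -f -` also accepts:

```python
import json


def hpa_manifest(deployment: str, min_replicas: int, max_replicas: int, cpu_percent: int) -> dict:
    """Build a HorizontalPodAutoscaler (autoscaling/v2) that scales a Deployment on CPU usage."""
    if not 1 <= min_replicas <= max_replicas:
        raise ValueError("expected 1 <= min_replicas <= max_replicas")
    return {
        "apiVersion": "autoscaling/v2",
        "kind": "HorizontalPodAutoscaler",
        "metadata": {"name": f"{deployment}-hpa"},
        "spec": {
            # The workload whose replica count the autoscaler adjusts.
            "scaleTargetRef": {"apiVersion": "apps/v1", "kind": "Deployment", "name": deployment},
            "minReplicas": min_replicas,
            "maxReplicas": max_replicas,
            # Add or remove Pods to keep average CPU utilization near the target percentage.
            "metrics": [{
                "type": "Resource",
                "resource": {
                    "name": "cpu",
                    "target": {"type": "Utilization", "averageUtilization": cpu_percent},
                },
            }],
        },
    }


if __name__ == "__main__":
    # Hypothetical Deployment name; pipe the output into `microk8s kubectl apply -f -`.
    print(json.dumps(hpa_manifest("hello-web", 1, 5, 70), indent=2))
```

Utilization targets are computed relative to the CPU requests of the containers, so the Deployment's containers need resource requests set for this kind of autoscaling to work.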

Microk8s is a lightweight Kubernetes distribution developed by Canonical, the company behind Ubuntu. It can run entirely on a single workstation or server, it can be used for development or production, and it is flexible enough to run on different CPU architectures such as ARM and Intel.

Kubernetes Terminology

Before proceeding to the installation of Microk8s, it is important to know some Kubernetes terminology. This is not an exhaustive glossary, but you should know at least the following terms:

- Kubernetes Cluster: A set of Kubernetes nodes that run containerized applications and are managed by a control plane.

- Kubernetes Nodes: The physical or virtual machines that form the cluster. They run the workloads (Pods) and Kubernetes components such as the API server, the scheduler, etc.

- Deployment: A Kubernetes object that declares the desired state of an application, for example which container image its Pods run and how many replicas should exist. You usually describe it in a YAML file (a manifest), and Kubernetes performs the API calls needed to create or update the Pods, through a ReplicaSet, until the actual state matches the declared one.

- Pods: The smallest deployable unit in Kubernetes: a group of one or more containers that share network and storage resources, such as volumes. All containers in a Pod share the same IP address.

- Services: A Service in Kubernetes is a method for exposing a network application that is running on one or more Pods in your cluster.

- ReplicaSet: A Kubernetes object that maintains a stable set of replica Pods running in the cluster at any given time. You rarely create one directly; Deployments create and manage ReplicaSets for you.

- Endpoint: An Endpoints resource tracks the IP addresses and ports of the Pods currently backing a Service. Because Pod IPs are assigned dynamically, Kubernetes updates it as Pods come and go.

- Ingress: An object that manages external access, typically HTTP and HTTPS, to services in a cluster.
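To see how several of these terms fit together, here is a minimal sketch that builds a Deployment and a matching Service as Python dictionaries and prints them as a JSON `List`, which `microk8s kubectl apply -f -` accepts just like YAML. The name `hello-web`, the `nginx:1.25` image, and port 80 are illustrative assumptions, not values from this post:

```python
import json


def deployment_manifest(name: str, image: str, replicas: int, port: int) -> dict:
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name},
        "spec": {
            # Desired Pod count, kept stable by the ReplicaSet the Deployment creates.
            "replicas": replicas,
            # Which Pods belong to this Deployment.
            "selector": {"matchLabels": labels},
            # The Pod template: every replica is created from it.
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {"name": name, "image": image, "ports": [{"containerPort": port}]}
                    ]
                },
            },
        },
    }


def service_manifest(name: str, port: int) -> dict:
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            # Traffic goes to Pods carrying this label; their IPs are tracked as Endpoints.
            "selector": {"app": name},
            "ports": [{"port": port, "targetPort": port}],
        },
    }


if __name__ == "__main__":
    items = [deployment_manifest("hello-web", "nginx:1.25", 3, 80), service_manifest("hello-web", 80)]
    print(json.dumps({"apiVersion": "v1", "kind": "List", "items": items}, indent=2))
```

The Service has no `type`, so Kubernetes gives it the default ClusterIP type.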

How Are Containerized Applications Exposed in a Kubernetes Cluster?

Before we can talk about how applications are exposed to the rest of the world, we need to know some basics about networking in a Kubernetes cluster.

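- Node IP: The IP address of a node on your network. Every node has one, including the node where the control plane lives (historically called the master node), which runs components such as the API server and the scheduler. In a single-node Microk8s installation, the node IP is simply the IP address of your host.

- Cluster IP: A virtual IP address assigned to a Service from an internal range. It is not bound to any network interface; traffic sent to it is forwarded to one of the Pods behind the Service.

- Pod IP: The IP address assigned to each Pod from the cluster's Pod network. Any Pod can reach any other Pod directly by its Pod IP, but Pod IPs change whenever Pods are recreated, so applications usually talk to each other through a Service's Cluster IP instead. When a Pod connects to something outside the cluster, the traffic typically leaves through its node and appears to come from the Node IP.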
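A quick way to tell the three networks apart is to check which address range an IP falls into. The sketch below uses Python's `ipaddress` module and assumes the ranges a default Microk8s installation typically uses (Pods in 10.1.0.0/16, Services in 10.152.183.0/24); these values are assumptions, so compare them with the Pod IPs shown by `microk8s kubectl get pods -o wide` and the Cluster IPs shown by `microk8s kubectl get services` on your own cluster:

```python
import ipaddress

# Assumed Microk8s defaults; verify against your own cluster before relying on them.
POD_CIDR = ipaddress.ip_network("10.1.0.0/16")
SERVICE_CIDR = ipaddress.ip_network("10.152.183.0/24")


def classify(ip: str) -> str:
    """Say which of the three networks an address most likely belongs to."""
    addr = ipaddress.ip_address(ip)
    if addr in SERVICE_CIDR:
        return "cluster-ip"  # virtual Service address, only reachable inside the cluster
    if addr in POD_CIDR:
        return "pod-ip"  # address of an individual Pod, changes when the Pod is recreated
    return "node-or-external"  # e.g. the host's address on your network


if __name__ == "__main__":
    for ip in ["10.152.183.10", "10.1.42.7", "192.168.1.50"]:
        print(ip, "->", classify(ip))
```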

The following image shows the three networks at a high level.

It is important to mention that each Pod has a unique IP address, but Pod IPs are not exposed outside of the cluster unless a Service is involved. Services can be exposed using different methods, such as:

- Cluster IP: The default method. It exposes the Service on an internal IP in the cluster, which makes the Service reachable only from within the cluster. You can still expose it to the public with an Ingress, the Gateway API, or External IPs. In simple environments the external IP is usually the Node IP; the disadvantage is that each port on that IP can be used by only one application.

- Node Port: This method exposes the Service on the same port of every node in the cluster, and traffic arriving at that port on the Node IP is forwarded to the Service. To avoid port conflicts, Kubernetes allocates the port from a dedicated range (30000-32767 by default) unless you choose one yourself. You then access your application using the Node IP, but on a high, arbitrary port; the port stays the same for the life of the Service, but changes if the Service is deleted and recreated.

- Load Balancer: Creates an external load balancer that assigns a separate IP address to the Service. Because every Service gets its own IP address, you can reuse the same ports for different applications inside your cluster. On bare metal this requires a load balancer implementation such as MetalLB.
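The three exposure methods described above differ mainly in the `type` field of the Service and a few extra settings. As a hedged sketch (the app name `hello-web`, the address `192.168.1.50`, and node port `30080` are hypothetical), this Python builds one Service manifest per method and rejects combinations the API would refuse:

```python
import json
from typing import Optional


def exposed_service(name: str, svc_type: str, port: int, target_port: int,
                    external_ip: Optional[str] = None, node_port: Optional[int] = None) -> dict:
    """Build a Service manifest for ClusterIP, NodePort or LoadBalancer exposure."""
    if svc_type not in ("ClusterIP", "NodePort", "LoadBalancer"):
        raise ValueError(f"unsupported type: {svc_type}")
    port_spec = {"port": port, "targetPort": target_port}
    if node_port is not None:
        if svc_type == "ClusterIP":
            raise ValueError("nodePort is not valid for a ClusterIP Service")
        if not 30000 <= node_port <= 32767:  # default node port range
            raise ValueError("nodePort outside the default 30000-32767 range")
        port_spec["nodePort"] = node_port
    spec = {"type": svc_type, "selector": {"app": name}, "ports": [port_spec]}
    if external_ip:
        # Traffic arriving at this address on `port` is routed to the Service.
        spec["externalIPs"] = [external_ip]
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": f"{name}-{svc_type.lower()}"},
        "spec": spec,
    }


if __name__ == "__main__":
    for svc in (
        exposed_service("hello-web", "ClusterIP", 80, 80, external_ip="192.168.1.50"),
        exposed_service("hello-web", "NodePort", 80, 80, node_port=30080),
        exposed_service("hello-web", "LoadBalancer", 80, 80),
    ):
        print(json.dumps(svc, indent=2))
```

Without a load balancer implementation such as MetalLB, a LoadBalancer Service's external IP stays in the `<pending>` state.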

How to Install Microk8s?

Microk8s is production ready, and for a more robust platform architecture it is recommended to install a cluster with several nodes. In this post we will install only one node, but adding new nodes is relatively simple; the documentation can be found here.

The bare minimum requirements for a Microk8s installation are:

- Ubuntu 22.04 LTS, 20.04 LTS, 18.04 LTS or 16.04 LTS as the host operating system

- 20 GB of disk space and 4 GB of memory (recommended)

- Internet Connectivity
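Before installing, you can check the disk and memory recommendations with a rough preflight script. This is a sketch under two assumptions: "G" is read as GiB, and the root filesystem is checked because snaps keep their data under /var/snap, which usually lives there. The memory lookup uses POSIX `sysconf`, which is available on Linux:

```python
import os
import shutil

GIB = 1024 ** 3
# Thresholds from the recommendations above (20 GB of disk, 4 GB of memory), read as GiB.
MIN_DISK_GIB = 20
MIN_MEM_GIB = 4


def total_memory_bytes() -> int:
    """Total physical memory, using POSIX sysconf (Linux)."""
    return os.sysconf("SC_PAGE_SIZE") * os.sysconf("SC_PHYS_PAGES")


def meets_requirements(free_disk: int, total_mem: int) -> dict:
    """Compare free disk space and total memory (both in bytes) with the recommendations."""
    return {
        "disk": free_disk >= MIN_DISK_GIB * GIB,
        "memory": total_mem >= MIN_MEM_GIB * GIB,
    }


if __name__ == "__main__":
    free = shutil.disk_usage("/").free  # approximation: assumes /var/snap is on the root filesystem
    for check, ok in meets_requirements(free, total_memory_bytes()).items():
        print(f"{check}: {'OK' if ok else 'below recommendation'}")
```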

We are not going to cover the installation of the underlying OS, but in this example we will use Ubuntu 22.04 LTS. Once the OS is installed, we have to update and upgrade the system:

sudo apt update && sudo apt upgrade -y

After upgrading the OS we can install Microk8s with:

sudo snap install microk8s --classic

Next, add your user to the microk8s group created by the installation, give your user ownership of the ~/.kube directory, and start a new login session so the group change takes effect:

sudo usermod -a -G microk8s $USER

sudo chown -f -R $USER ~/.kube

su - $USER

Let's check the status of Microk8s with:

microk8s status

If the status is “microk8s is running”, then congratulations! You installed Microk8s correctly.

Installing Microk8s Addons

Microk8s is flexible enough that you can extend its functionality by enabling add-ons. Each add-on has a specific purpose and comes either from the core repository or from the community repository.

Installing an add-on is really simple:

microk8s enable <add-on name>

You can find a complete list of the add-ons by following this external link: https://microk8s.io/docs/addons#heading--list

At this point we are going to install the following add-ons:

- dns: Deploys CoreDNS to provide name resolution for services and Pods inside the cluster. You can find more information here: https://microk8s.io/docs/addon-dns

- dashboard: The standard Kubernetes Dashboard, which lets you keep track of the activity and resource usage of Microk8s. You can find more info here: https://microk8s.io/docs/addon-dashboard

- helm3: Installs the Helm 3 package manager. You can find more information about Helm here: https://helm.sh/

- hostpath-storage: Creates a default storage class that allocates storage from a directory on the host. You can find more info here: https://microk8s.io/docs/addon-hostpath-storage

- metallb: Deploys the MetalLB load balancer, which lets you expose services on specific IP addresses of your network. When you enable it, Microk8s asks for the range of IP addresses that MetalLB may assign. You can find more info here: https://microk8s.io/docs/addon-metallb

- observability: Deploys a monitoring stack built around Prometheus, an open-source systems monitoring and alerting toolkit, together with Grafana for dashboards. You can find more information about Prometheus here: https://prometheus.io/docs/introduction/overview/

- registry: Deploys a private image registry and exposes it on localhost:32000.

To install all of these add-ons at once, run the following command:

microk8s enable dns dashboard helm3 hostpath-storage metallb observability registry
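Because MetalLB needs an address range, it can help to validate the range before enabling the add-on. The sketch below assumes the `metallb:<start>-<end>` argument form described in the MetalLB add-on documentation, and uses hypothetical addresses on a 192.168.1.0/24 network; pick a range on your LAN that your DHCP server does not hand out:

```python
import ipaddress


def metallb_range(start: str, end: str, lan: str) -> str:
    """Validate a MetalLB address pool and format it as start-end."""
    first, last = ipaddress.ip_address(start), ipaddress.ip_address(end)
    network = ipaddress.ip_network(lan)
    if first > last:
        raise ValueError("range start must not be after range end")
    if first not in network or last not in network:
        raise ValueError(f"range must be inside {network}")
    return f"{first}-{last}"


def enable_command(addons: list, pool: str) -> list:
    """Build the argv for `microk8s enable`, passing the address pool to metallb."""
    return ["microk8s", "enable"] + [f"metallb:{pool}" if a == "metallb" else a for a in addons]


if __name__ == "__main__":
    pool = metallb_range("192.168.1.240", "192.168.1.250", "192.168.1.0/24")
    addons = ["dns", "dashboard", "helm3", "hostpath-storage", "metallb", "observability", "registry"]
    print(" ".join(enable_command(addons, pool)))
```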

Configuration of Observability and Access to the Monitoring System

Once all the add-ons are enabled, you have to expose the Grafana service to your network. This can be done with the ClusterIP method and an external IP, using the following command:

microk8s kubectl -n observability expose service kube-prom-stack-grafana --external-ip="<Host IP>" --port=3000 --target-port=3000 --name=kubernetes-prometheus-grafana

Here, “port” is the port the new service listens on at the host's IP, and “target-port” is the port Grafana listens on inside the Pod. With this command, Grafana is exposed on port 3000 of the host's external IP (the Ubuntu server). Observability is a monitoring system for Kubernetes, and you can access its portal at http://<Host IP>:3000/
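To confirm from another machine that the exposed port really reaches Grafana, you can query Grafana's `/api/health` endpoint, which returns a small JSON document whose `database` field is `"ok"` when Grafana is working. A minimal sketch using only the standard library (the script name `check_grafana.py` is hypothetical):

```python
import json
import sys
import urllib.request


def grafana_health_url(host_ip: str, port: int = 3000) -> str:
    return f"http://{host_ip}:{port}/api/health"


def is_healthy(payload) -> bool:
    """Grafana's health endpoint reports "database": "ok" when it is working."""
    return isinstance(payload, dict) and payload.get("database") == "ok"


def check_grafana(host_ip: str, port: int = 3000, timeout: float = 5.0) -> bool:
    try:
        with urllib.request.urlopen(grafana_health_url(host_ip, port), timeout=timeout) as resp:
            return is_healthy(json.load(resp))
    except (OSError, ValueError):
        # Connection refused, timeout, HTTP error status, or a reply that is not JSON.
        return False


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python3 check_grafana.py <Host IP>
    print("Grafana healthy:", check_grafana(sys.argv[1]))
```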

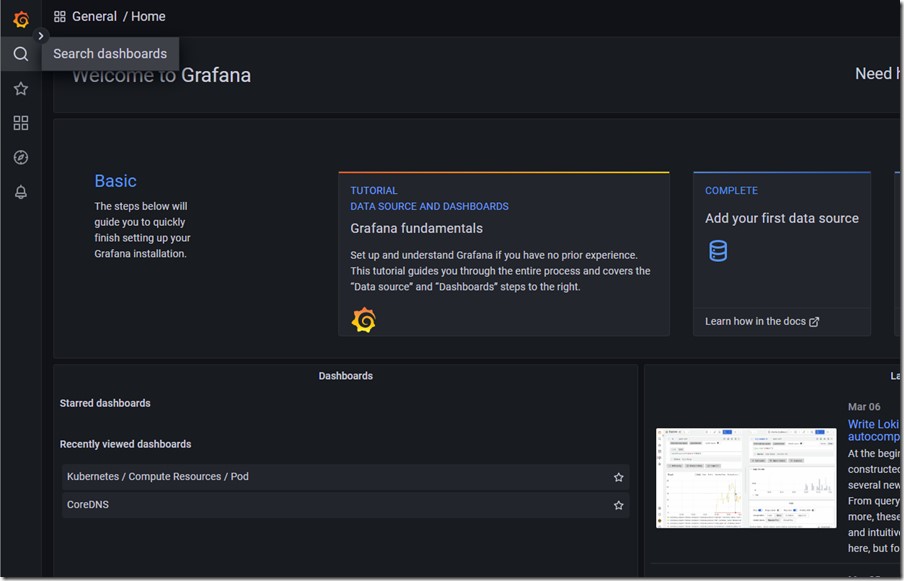

Once you reach the login page, enter the credentials admin/prom-operator and you will be granted access to the monitoring system. To see the predefined dashboards, go to the toolbar on the left and click “Search dashboards”, as shown in the next image.

In the next window, expand the “General” section to list the dashboards, then choose one of them to see the collected data, as in the example dashboard below.

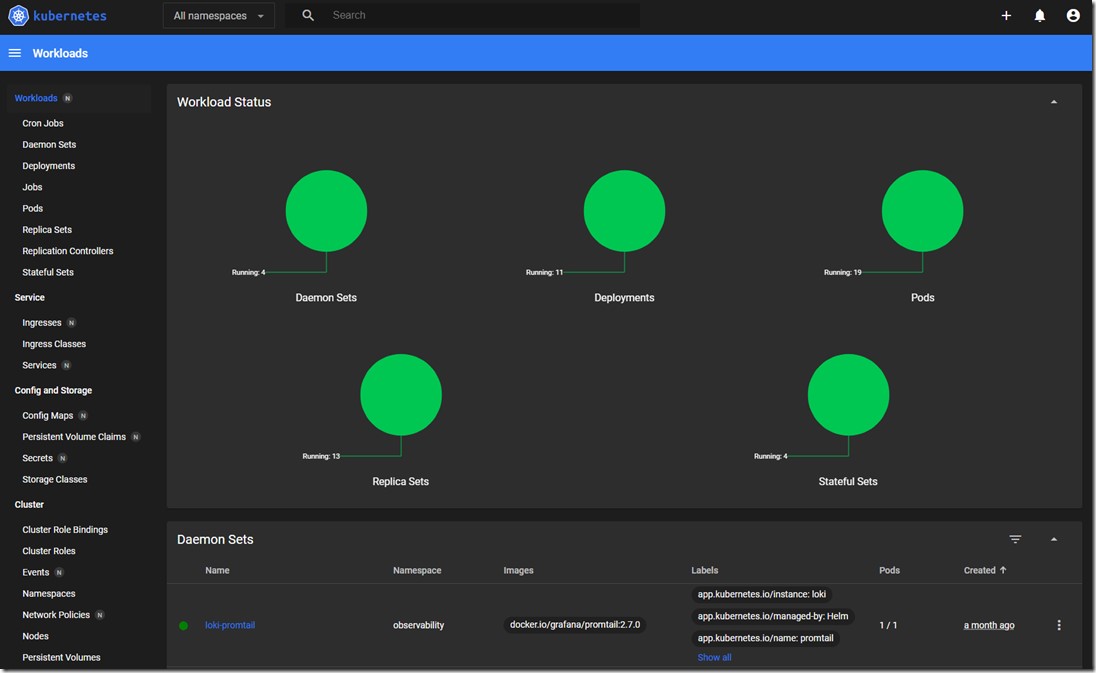

How to access the Kubernetes Dashboard

You can also access the default Kubernetes Dashboard. With this dashboard you can not only monitor the resources used by your Pods and services, but also deploy Pods and services. To do this, you first need to expose the service with the ClusterIP method and an external IP, using port 443 and target port 8443, with the command:

microk8s kubectl -n kube-system expose service kubernetes-dashboard --external-ip="<Host IP>" --port=443 --target-port=8443 --name=kubernetes-dashboard-https

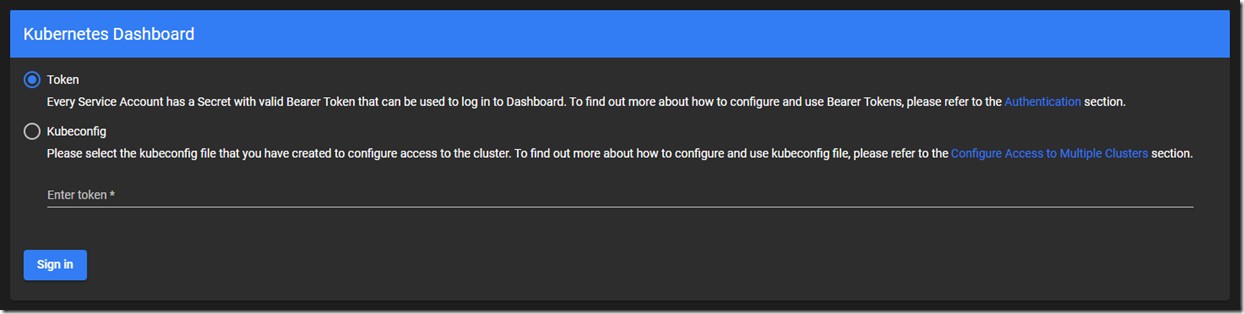

With the previous command you can access the Kubernetes Dashboard at https://<Host IP>/ (note the HTTPS: the dashboard uses a self-signed certificate, so your browser will probably warn you before continuing). Once connected, you will see the dashboard login screen.

To authenticate your session you need a token generated by the server. You can retrieve one with the command:

microk8s kubectl create token default

This command generates a token; copy it and paste it into the login page in your web browser to authenticate your session and gain access to the dashboard.
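The token is a JSON Web Token (JWT): three base64url-encoded segments separated by dots, where the middle one is JSON containing claims such as the service account (`sub`) and the expiry time (`exp`). Tokens created this way are short-lived; if yours expires too quickly, `kubectl create token` accepts a `--duration` flag. As a convenience sketch (the script name `token_info.py` is hypothetical), the following decodes the payload locally, without verifying the signature, so you can see when the token stops working:

```python
import base64
import json
import sys
from datetime import datetime, timezone


def jwt_claims(token: str) -> dict:
    """Decode the payload segment of a JWT. The signature is NOT verified."""
    parts = token.strip().split(".")
    if len(parts) != 3:
        raise ValueError("not a JWT: expected header.payload.signature")
    payload = parts[1]
    payload += "=" * (-len(payload) % 4)  # restore the base64 padding JWTs strip
    return json.loads(base64.urlsafe_b64decode(payload))


def expires_at(token: str) -> datetime:
    """Return the token's expiry time (the `exp` claim) as a UTC datetime."""
    return datetime.fromtimestamp(jwt_claims(token)["exp"], tz=timezone.utc)


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python3 token_info.py "$(microk8s kubectl create token default)"
    tok = sys.argv[1]
    print("subject:", jwt_claims(tok).get("sub"))
    print("expires:", expires_at(tok).isoformat())
```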

What is the Private Image Registry?

Before going into what a private registry is and how to configure it, we need to explain container images. A container image is a read-only package that contains the application's executable code, its dependencies, and the configuration needed to create a container. A registry is a repository where these images are stored; registries can be public or private depending on who has access to them. With a private registry, the people who have access can build their own images from different base images and store them there. Every time a new container needs to be launched from a specific image, the container runtime checks whether the image is already present on the node; if it is missing (or, depending on the image pull policy, if it should be refreshed), the runtime pulls it from the registry and then creates the container.

The steps to build images and push them to a registry are out of the scope of this article, and the process depends on which container solution you are using: Docker, LXD, etc.

The private registry we installed as an add-on runs in a Pod whose software listens on port 5000, and the add-on exposes it through a NodePort service on port 32000, so every time you connect to the registry you will use <host-ip>:32000.

Note: It is really important to understand that this private registry is not secure (it has no authentication and is served over plain HTTP) and shouldn't be used as-is in a production environment.
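An image's name tells the runtime which registry to pull it from. The sketch below is a simplified version of the naming convention Docker-compatible tools follow (digests and some edge cases are deliberately not handled, and `myapp` is a hypothetical image): it splits a reference into registry, repository and tag, which shows why an image stored in the Microk8s registry is referenced as `localhost:32000/myapp:1.0`:

```python
from typing import NamedTuple


class ImageRef(NamedTuple):
    registry: str
    repository: str
    tag: str


def parse_image(ref: str) -> ImageRef:
    """Split an image reference into registry, repository and tag (digests not handled)."""
    if "@" in ref:
        raise ValueError("digest references are not handled in this sketch")
    first, sep, rest = ref.partition("/")
    # The first component is a registry host if it has a dot or a port, or is localhost.
    if sep and ("." in first or ":" in first or first == "localhost"):
        registry, remainder = first, rest
    else:
        registry, remainder = "docker.io", ref
        if "/" not in remainder:
            remainder = "library/" + remainder  # official Docker Hub images
    # A tag is the part after a ':' that follows the last '/'; "latest" is implied otherwise.
    name, colon, tag = remainder.rpartition(":")
    if not colon or "/" in tag:
        name, tag = remainder, "latest"
    return ImageRef(registry, name, tag)


if __name__ == "__main__":
    for ref in ["nginx", "localhost:32000/myapp:1.0"]:
        print(ref, "->", parse_image(ref))
```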
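The registry speaks the standard Registry HTTP API v2, so you can list what it holds without any extra tooling: `GET /v2/_catalog` returns the repositories and `GET /v2/<name>/tags/list` returns each repository's tags. A minimal sketch with the standard library (the script name `list_registry.py` is hypothetical, and plain HTTP is used because that is how the add-on serves the registry):

```python
import json
import sys
import urllib.request


def catalog_url(host_ip: str, port: int = 32000) -> str:
    # Registry HTTP API v2 endpoint that lists repositories.
    return f"http://{host_ip}:{port}/v2/_catalog"


def tags_url(host_ip: str, repository: str, port: int = 32000) -> str:
    # Registry HTTP API v2 endpoint that lists the tags of one repository.
    return f"http://{host_ip}:{port}/v2/{repository}/tags/list"


def get_json(url: str, timeout: float = 5.0) -> dict:
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


if __name__ == "__main__" and len(sys.argv) > 1:
    # Usage: python3 list_registry.py <host-ip>
    host = sys.argv[1]
    for repo in get_json(catalog_url(host)).get("repositories", []):
        tags = get_json(tags_url(host, repo)).get("tags") or []  # "tags" may be null
        print(repo, ", ".join(tags))
```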

Conclusion

Microk8s is really easy to install and deploy, and it gives you the ability to run Kubernetes, even in a production environment, without needing an exorbitant amount of resources.